Direct-to-Chip Cooling Benefits in Modern Data Centers

Direct-to-chip (D2C) cooling delivers coolant directly to the hottest components of a server (CPUs, GPUs, accelerators) via cold plates mounted on the chips themselves. Beyond supporting performance, direct-to-chip cooling can reduce data center energy consumption, of which cooling accounts for a sizable share. According to the IEA, cooling ranges from about 7% of total energy consumption in efficient hyperscale data centers to around 25-30% in less efficient operations.

In this article, we'll review a few key points, including:

- Direct-to-chip cooling is critical to supporting the higher rack densities required in modern data centers, which can range from 60-120 kW (and even higher in some cases).

- Direct-to-chip cooling can significantly reduce the energy consumed by the cooling process.

- Compared with legacy systems, direct-to-chip cooled data centers can see significant improvement in power usage effectiveness (PUE).

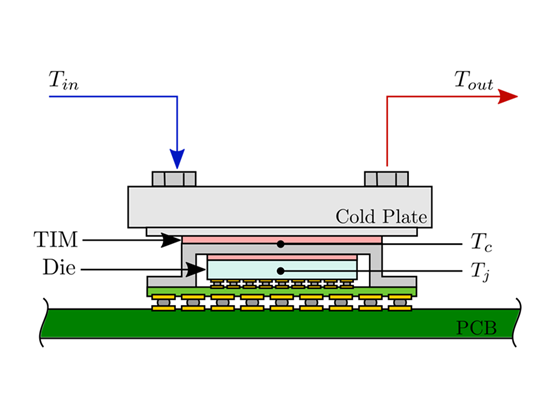

Key Components of a Direct-to-Chip Cooling System

- Cold plates (typically copper or copper-aluminum)

- Thermal interface materials (TIM)

- Manifolds, quick-connects, hoses/tubing

- Circulation pump(s)

- Coolant Distribution Unit (CDU) for heat rejection and flow control

Coolant Types

Common coolants include water-glycol mixtures (like Dober COOLWAVE™ heat transfer fluids), engineered dielectric fluids, and specialized single- or two-phase refrigerants.

Two main variants:

- Single-phase D2C: Coolant remains liquid throughout the loop.

- Two-phase D2C: Coolant boils inside or near the cold plate (liquid → vapor), then recondenses in the CDU, dramatically increasing heat transfer capability for extreme TDPs.

D2C systems are almost always closed-loop (coolant is fully contained and recirculated), minimizing contamination, leakage risk, and water consumption compared to open systems.

By placing the cooling medium only millimeters from the silicon die, D2C drastically reduces thermal resistance compared to air cooling. This is rooted in basic physics: water's specific heat capacity (~4,180 J/kg·K) and thermal conductivity (~0.6 W/m·K at 20 ℃) are higher than those of air (~1,005 J/kg·K and ~0.026 W/m·K). Because water is also roughly 800 times denser than air, its ability to store heat per unit volume (volumetric heat capacity) is a few thousand times greater. In a nutshell, water both stores far more heat and conducts it more readily than air.
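To make the comparison concrete, here is a minimal Python sketch that uses the property values quoted above. The densities (998 kg/m³ for water, 1.2 kg/m³ for air) and the 1,000 W, 10 K example case are assumptions added for illustration, not figures from the sources cited in this article.

```python
# Specific heat (cp) and thermal conductivity (k) are the values quoted in the text.
# Densities (rho) are assumed round numbers for roughly 20 °C at sea level.
WATER = {"cp": 4180.0, "k": 0.6, "rho": 998.0}   # J/kg·K, W/m·K, kg/m³
AIR = {"cp": 1005.0, "k": 0.026, "rho": 1.2}


def volumetric_heat_capacity(fluid):
    """Heat stored per cubic metre per kelvin (J/m³·K)."""
    return fluid["rho"] * fluid["cp"]


def volume_flow_for_heat(fluid, heat_w, delta_t_k):
    """Volume flow (m³/s) needed to carry heat_w watts with a delta_t_k rise.

    Steady-state energy balance: Q = rho * V_dot * cp * dT.
    """
    return heat_w / (volumetric_heat_capacity(fluid) * delta_t_k)


ratio_cp = WATER["cp"] / AIR["cp"]
ratio_k = WATER["k"] / AIR["k"]
ratio_vol = volumetric_heat_capacity(WATER) / volumetric_heat_capacity(AIR)

# Hypothetical case: a single 1,000 W chip and a 10 K coolant temperature rise.
water_lpm = volume_flow_for_heat(WATER, 1000.0, 10.0) * 1000 * 60
air_lpm = volume_flow_for_heat(AIR, 1000.0, 10.0) * 1000 * 60

print(f"specific heat ratio (per kg):   {ratio_cp:.1f}x")
print(f"thermal conductivity ratio:     {ratio_k:.0f}x")
print(f"volumetric heat capacity ratio: {ratio_vol:.0f}x")
print(f"water flow for 1 kW at 10 K:    {water_lpm:.2f} L/min")
print(f"air flow for 1 kW at 10 K:      {air_lpm:.0f} L/min")
```

Under these assumptions, the per-kilogram advantage of water is only about fourfold, while the per-volume advantage is in the thousands. This is why a liquid loop can move the same heat with a small fraction of the flow an air stream would need. The exact ratio shifts with the air density and temperature assumed.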

Modern AI accelerators span 600–1,200 W TDP depending on chip and configuration. NVIDIA's H100 and H200 operate at 700 W; the B200 reaches 1,000–1,200 W in various configurations. AMD's MI300X is rated at 750 W, while Intel's Gaudi3 operates at approximately 600 W. These thermal loads make liquid cooling essential. D2C cooling is an effective way to cool these chips at full performance without throttling.

Direct-to-chip cooling enables rack densities of 60–120+ kW, which are out of reach for air cooling. Air-cooled racks typically top out at 20-30 kW, depending on the facility. A 2026 study in the journal Energy and Buildings found that air-cooled facilities with row-based cooling can support rack densities up to 29.5 kW (Zhou et al.).
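As a rough illustration of what these rack limits mean in practice, the sketch below estimates how many accelerator servers fit under a given rack power budget. The server layout (8 accelerators per server) and the 40% allowance for CPUs, memory, networking, and power conversion are hypothetical assumptions, not figures from the sources cited here.

```python
import math


def server_power_w(chip_tdp_w, chips_per_server=8, overhead_fraction=0.4):
    """Estimated server power: accelerator TDP plus an assumed overhead share.

    chips_per_server and overhead_fraction are illustrative assumptions.
    """
    return chip_tdp_w * chips_per_server * (1 + overhead_fraction)


def servers_per_rack(rack_limit_kw, chip_tdp_w, **server_kwargs):
    """Whole servers that fit within a rack power limit given in kW."""
    per_server_w = server_power_w(chip_tdp_w, **server_kwargs)
    return math.floor(rack_limit_kw * 1000 / per_server_w)


# Compare the 29.5 kW air-cooled figure with a 120 kW D2C rack,
# for 700 W and 1,000 W accelerators.
for tdp in (700, 1000):
    air = servers_per_rack(29.5, tdp)
    d2c = servers_per_rack(120, tdp)
    print(f"{tdp} W chips: {air} servers (29.5 kW rack) vs {d2c} servers (120 kW rack)")
```

With these assumed numbers, an air-cooled rack holds only two or three such servers, which leaves most of the rack empty. That gap is what higher-density liquid-cooled racks are meant to close.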

How Direct-to-Chip Cooling Efficiency Impacts Data Center Energy Consumption

In a nutshell, a direct-to-chip cooling approach can greatly reduce data center energy consumption compared with traditional air cooling:

- Figures vary, but as much as 40% of energy consumption in data centers is devoted to cooling.

- D2C can reduce cooling subsystem energy consumption by up to 90%, according to a number of industry sources ranging from corporate to governmental analyses. Significantly less fan power is required at the server level, and the CDU uses far less energy than large-scale air handlers.

- Real-world overall facility energy savings with D2C are typically 20–30%, which translates into power usage effectiveness (PUE) improvements from 1.5–2.0 (air) down to 1.03–1.20 (D2C). That said, PUE improvements depend significantly on climate, facility design, and IT load.

- High coolant return temperatures (35-50℃ for water-glycol single-phase systems) enable efficient waste-heat reuse or free cooling for most of the year. One NREL study measured return temperatures ranging from 32-48℃ (Sickinger et al.).
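The link between PUE and facility energy savings in the list above can be sketched with simple arithmetic. PUE is total facility energy divided by IT energy, so at a constant IT load the total energy scales directly with PUE. The function name and the example PUE pairs below are illustrative assumptions drawn from the ranges quoted above, not measured results.

```python
def facility_energy_saving(pue_before, pue_after):
    """Fractional drop in total facility energy at constant IT load.

    Total energy = PUE * IT energy, so the saving is 1 - pue_after / pue_before.
    """
    if pue_before < 1.0 or pue_after < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - pue_after / pue_before


# Example pairs taken from the PUE ranges quoted in the text.
for before, after in [(1.5, 1.2), (1.5, 1.03), (2.0, 1.2)]:
    saving = facility_energy_saving(before, after)
    print(f"PUE {before} -> {after}: {saving:.1%} less total facility energy")
```

Moving from a PUE of 1.5 to 1.2 corresponds to a 20% reduction in total facility energy, and larger PUE improvements give larger savings. The actual result for any site depends on climate, facility design, and IT load, as noted above.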

Technical & Operational Challenges Specific to Direct-to-Chip Cooling

So what do you need to do to implement direct-to-chip cooling in your data center? While direct-to-chip cooling provides significant benefits in terms of energy use reduction and cooling capacity, there are some challenges associated with rolling it out in your facility.

Here are some things to think about:

- Higher upfront design and installation complexity (cold-plate integration, leak-free quick-connects, manifold routing).

- Leak risk if using conductive coolants — mitigated by dielectric fluids or robust dual-loop/redundant designs.

- Residual heat from VRMs, DIMMs, and drives still requires some airflow or hybrid cooling.

- Retrofitting existing air-cooled servers/racks often requires new motherboards designed or validated for cold plates.

However, as noted previously, the benefits of direct-to-chip cooling are many, including the ability to handle far greater rack densities and a significant reduction in energy use for cooling. When it comes to data center cooling, direct-to-chip cooling delivers consistent, long-term returns.

Direct-to-Chip vs. Air Cooling in Data Centers: Comparison Table

| Metric | Traditional Air Cooling | Direct-to-Chip (D2C) Liquid Cooling |
| --- | --- | --- |
| Max practical rack power | 20–35 kW | 60–120+ kW |
| Chip-level TDP supported | 200–350 W | 700–1,200+ W |
| Cooling energy consumption | 38–40% of total power | 4–8% of total power |
| Typical PUE | 1.5–2.0 | 1.03–1.20 |
| Volumetric heat capacity of cooling medium | Baseline (air) | ~3,200× higher than air (water) |
| Waste heat temperature | 30–40 °C (hard to reuse) | 60–75 °C+ (excellent for heat reuse) |
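The cooling-energy and PUE rows of the table can be cross-checked against each other. If cooling takes a given share of total facility power and all other non-IT overhead is ignored, the implied PUE is 1 / (1 − cooling share). The sketch below applies that relation. The function name and the zero default for other overhead are assumptions for illustration, so the results are lower bounds rather than predictions.

```python
def pue_from_cooling_share(cooling_share, other_overhead_share=0.0):
    """PUE implied when cooling (plus other overhead) is a share of total power.

    PUE = total / IT, and IT share = 1 - cooling_share - other_overhead_share.
    """
    it_share = 1.0 - cooling_share - other_overhead_share
    if it_share <= 0.0:
        raise ValueError("overhead shares must leave some power for IT")
    return 1.0 / it_share


# Cooling shares from the comparison table; other overhead assumed to be zero.
for label, share in [("air, 38%", 0.38), ("air, 40%", 0.40),
                     ("D2C, 4%", 0.04), ("D2C, 8%", 0.08)]:
    print(f"{label}: implied PUE of at least {pue_from_cooling_share(share):.2f}")
```

Under this simplification, the air-cooled shares imply a PUE of roughly 1.6 to 1.7 and the D2C shares imply roughly 1.04 to 1.09. Both fall inside the typical PUE ranges listed in the table, and real facilities sit somewhat higher once power distribution and other overheads are included.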

Visit our data center coolants page to learn more about Dober COOLWAVE™ coolants — including our OCP Inspired™ solution, COOLWAVE DC-25 — for direct-to-chip data center cooling applications.

Supporting References

1. IEA. (n.d.). Energy demand from AI – Energy and AI – Analysis - IEA. Energy Demand from AI. https://www.iea.org/reports/energy-and-ai/energy-demand-from-ai

2. Thermal conductivity of water: Temperature and pressure data. The Engineering ToolBox. (n.d.). https://www.engineeringtoolbox.com/water-liquid-gas-thermal-conductivity-temperature-pressure-d_2012.html

3. Tosukhowong, T. (2024, July 18). King of Hill in data center cooling - DCD. King of Hill in data center cooling. https://www.datacenterdynamics.com/en/opinions/king-of-hill-in-data-center-cooling/

4. Ramachandran, K., Stewart, D., Hardin, K., Crossan, G., & Bucaille, A. (2024, November 24). As generative AI asks for more power, data centers seek more reliable, Cleaner Energy Solutions. Deloitte Insights. https://www.deloitte.com/us/en/insights/industry/technology/technology-media-and-telecom-predictions/2025/genai-power-consumption-creates-need-for-more-sustainable-data-centers.html

5. Sickinger, D., Van Geet, O., & Ravenscroft, C. (2014, November). Energy Performance Testing of Asetek’s RackCDU System at NREL’s High Performance Computing Data Center. https://www.nrel.gov/docs/fy15osti/62905.pdf

6. National Renewable Energy Laboratory. (2015, March). NREL. National Renewable Energy Laboratory. https://docs.nrel.gov/docs/fy15osti/63433.pdf

7. Zhou, H., Zeng, J., Song, M., Sun, X., & Qin, S. (2026). Adaptability assessment of air-cooling systems for data center with varied rack power densities. Energy and Buildings, 357, 117201. https://doi.org/10.1016/j.enbuild.2026.117201 (https://www.sciencedirect.com/science/article/pii/S0378778826002616)